Context Engineering: The Industry’s Growing Awareness of Context’s Critical Role in AI

- Francois Embit

- Tech

- July 21, 2025

Why Context Is Suddenly in the Spotlight

Not long ago, building AI-powered applications often meant obsessing over prompt phrasing or fine-tuning models. But as organizations deploy increasingly complex AI agents (handling customer queries, automating workflows, or synthesizing vast knowledge bases), a new bottleneck has emerged: context.

Consider a virtual assistant tasked with booking travel, answering HR questions, and summarizing company policies. Despite using a state-of-the-art large language model (LLM), it flounders—missing key details, hallucinating answers, or failing to follow nuanced user instructions. The culprit? Not the model, but the information it receives: incomplete, irrelevant, or poorly structured.

This is the heart of context engineering—the discipline of ensuring AI systems have the right information, in the right format, at the right time. As the industry awakens to its importance, context engineering is rapidly becoming a cornerstone of reliable, scalable AI architectures.

This shift is echoed by industry leaders. Tobi Lutke, CEO of Shopify, succinctly captured the essence of the challenge in a recent tweet (source):

“I really like the term ‘context engineering’ over prompt engineering. It describes the core skill better: the art of providing all the context for the task to be plausibly solvable by the LLM.”

Andrej Karpathy, a prominent voice in AI, reinforced this sentiment (source):

“+1 for ‘context engineering’ over ‘prompt engineering’. People associate prompts with short task descriptions you’d give an LLM in your day-to-day use. When in every industrial-strength LLM app, context engineering is the delicate art and science of filling the context window with just the right information for the next step…”

From Prompts to Holistic Context: The Evolution

TL;DR / Key Takeaways

- Context engineering is now recognized as a critical factor in reliable, scalable AI systems, and for complex agentic architectures it matters more than prompt engineering.

- Effective context engineering involves dynamic selection, compression, isolation, and structuring of information for LLMs.

- Practitioners such as Philipp Schmid argue that most failures in modern AI agents are context failures, not model failures.

- New tools and frameworks are emerging, but evaluation practices and professional standards are still catching up.

- Addressing context engineering requires technical, organizational, and ethical investment—spanning privacy, security, and skills development.

Beyond Prompt Engineering

Prompt engineering—crafting clever instructions for LLMs—was the first wave of user-driven AI optimization. But as tasks grew more complex and agents more autonomous, it became clear that prompts alone were insufficient.

Context engineering expands the scope:

- Prompt engineering focuses on how you ask.

- Context engineering governs what the model knows when it answers.

This shift reflects a broader understanding: LLMs are only as good as the context they’re given. As Philipp Schmid notes, “Most agent failures are now context failures, not model failures.” (PhilSchmid)

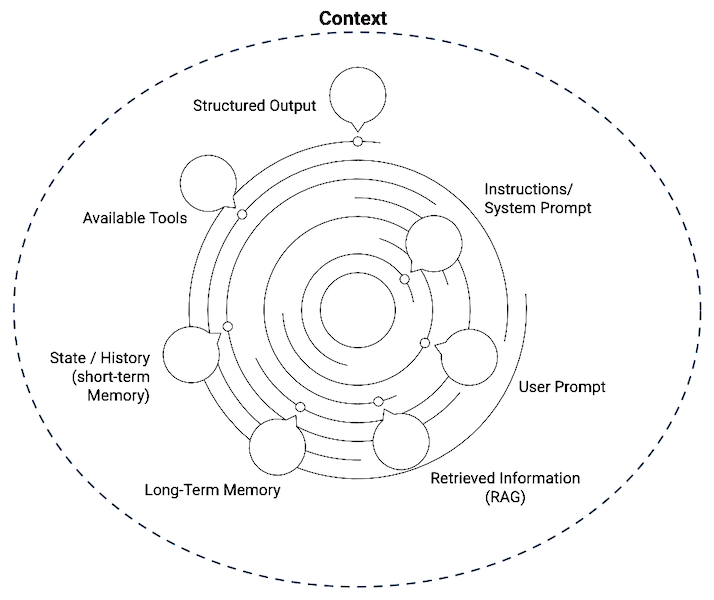

The Anatomy of Context

In modern AI systems, “context” can include:

- User inputs (current query, conversation history)

- External knowledge (documents, databases, APIs)

- Workflow state (previous steps, intermediate results)

- Tools and functions (code execution, calculators, search)

Context engineering orchestrates these elements, dynamically assembling the optimal “context window” for each LLM call.
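As a rough illustration of what "assembling a context window" can mean in code, the sketch below gathers the four kinds of context listed above into one prompt string. The `ContextParts` class, the `assemble_context` function, the section labels, and the sample policy text are all hypothetical, chosen only for this example; real systems will order and format sections differently.

```python
from dataclasses import dataclass, field


@dataclass
class ContextParts:
    """Hypothetical container for the four context sources listed above."""
    query: str                                            # current user input
    history: list[str] = field(default_factory=list)      # conversation history
    documents: list[str] = field(default_factory=list)    # external knowledge
    state: dict[str, str] = field(default_factory=dict)   # workflow state
    tools: list[str] = field(default_factory=list)        # tool descriptions


def assemble_context(parts: ContextParts) -> str:
    """Render non-empty sections in a fixed order, ending with the query."""
    sections = []
    if parts.tools:
        sections.append("## Tools\n" + "\n".join(f"- {t}" for t in parts.tools))
    if parts.documents:
        sections.append("## Documents\n" + "\n\n".join(parts.documents))
    if parts.state:
        lines = [f"{k}: {v}" for k, v in parts.state.items()]
        sections.append("## Workflow state\n" + "\n".join(lines))
    if parts.history:
        sections.append("## Conversation\n" + "\n".join(parts.history))
    sections.append("## Current query\n" + parts.query)
    return "\n\n".join(sections)


# Invented sample data for illustration only.
parts = ContextParts(
    query="Can I expense a train ticket?",
    documents=["Travel policy: rail travel is reimbursable with a receipt."],
    state={"step": "policy_lookup"},
)
prompt = assemble_context(parts)
```

Empty sections are skipped, so the rendered prompt only spends space on sources that actually have content for this call.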

Evidence from Industry and Research

Why Context Engineering Matters Now

The rise of agentic architectures—where LLMs act as autonomous agents or orchestrate multi-step workflows—has made context engineering indispensable. According to the LangChain team, “the limiting factor for agent reliability is no longer the model, but the quality and relevance of the context provided.”

Key drivers:

- Model improvements have outpaced context delivery. As LLMs become more capable, the quality of their input, rather than the model itself, increasingly limits the quality of their output: “garbage in, garbage out.”

- Context windows remain limited. Even the largest models can only process a finite amount of information per request, making selection and compression critical.

- Cost and latency pressures. Overloading context increases compute costs and slows responses; under-providing leads to poor performance.
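The trade-off between a finite window and over- or under-providing context can be sketched as a simple budgeting step. The example below assumes snippets arrive already ranked by priority and uses a whitespace word count as a crude stand-in for a real tokenizer; `estimate_tokens`, `pack_within_budget`, and the sample snippets are hypothetical.

```python
def estimate_tokens(text: str) -> int:
    """Crude stand-in for a real tokenizer: count whitespace-separated words."""
    return len(text.split())


def pack_within_budget(snippets: list[str], budget: int) -> list[str]:
    """Keep snippets in priority order, skipping any that would exceed the budget."""
    kept, used = [], 0
    for snippet in snippets:
        cost = estimate_tokens(snippet)
        if used + cost <= budget:
            kept.append(snippet)
            used += cost
    return kept


# Invented sample snippets, highest priority first.
ranked = [
    "Refunds are processed within five business days.",
    "Our office dog is named Biscuit and enjoys long walks in the park.",
    "Contact support by email.",
]
packed = pack_within_budget(ranked, budget=12)
# The long second snippet is skipped; the first and third fit together.
```

A production system would likely swap in the model's own tokenizer, since word counts can differ noticeably from token counts.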

Industry Examples

- Enterprise search assistants often rely on retrieval-augmented generation (RAG) to fetch relevant documents. Poor context selection leads to irrelevant or misleading answers, regardless of model quality.

- Multi-agent systems (e.g., in workflow automation) must manage context isolation, ensuring each agent receives only the information it needs—no more, no less.

- Customer service bots that maintain long-term memory (e.g., previous tickets, user preferences) require sophisticated context assembly to avoid forgetting or misusing information.

Academic Perspective

While most literature is practitioner-driven, a recent arXiv survey (A Survey of Context Engineering for Large Language Models) formalizes the field, highlighting context engineering as a “core bottleneck for reliable LLM-based systems” and calling for systematic evaluation and standardization.

What Growing Awareness Means for Our Workflows

Core Techniques and Strategies

Context Selection

- Goal: Filter and rank information to surface what’s most relevant.

- How: Use retrieval systems (vector search, keyword match), memory selection, or custom ranking algorithms.

- Example: An internal knowledge bot retrieves only the top 3 most relevant policy documents for a user’s question.
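To make the "top 3 documents" idea concrete, here is a minimal sketch that ranks documents by how many words they share with the query. Keyword overlap is used only because it needs no external libraries; a real retrieval system would more likely use vector search or a tuned ranking model. The `tokenize` and `top_k` helpers and the sample policies are hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def top_k(query: str, documents: dict[str, str], k: int = 3) -> list[str]:
    """Return names of up to k documents sharing the most words with the query."""
    q = tokenize(query)
    scored = [(len(q & tokenize(body)), name) for name, body in documents.items()]
    # Drop documents with no overlap, then sort by score (descending) and name.
    relevant = sorted((s for s in scored if s[0] > 0), key=lambda s: (-s[0], s[1]))
    return [name for _, name in relevant[:k]]


# Invented sample policies for illustration only.
policies = {
    "parental_leave": "Parental leave policy: employees receive paid leave after birth or adoption.",
    "travel": "Travel policy: book flights through the approved portal.",
    "security": "Security policy: rotate passwords and report phishing.",
    "remote_work": "Remote work policy: employees may work from home two days a week.",
}
selected = top_k("How much paid parental leave do employees get?", policies, k=3)
```

Documents with zero overlap are dropped rather than used to pad the result, so fewer than `k` names may come back; here only two of the four policies share any words with the query.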

Context Compression

- Goal: Fit more useful information into the LLM’s limited context window by reducing the space each item takes up.

- How: Summarize, prune, or chunk data; use extractive or abstractive summarization.

- Example: Summarizing a 20-page report into a 300-word executive summary for the model.
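One simple form of extractive summarization can be sketched with word frequencies: score each sentence by how often its words appear in the whole text, then keep the top few in their original order. This is a deliberately naive heuristic, not the method any particular framework uses; `extractive_summary` and the sample report text are hypothetical.

```python
import re
from collections import Counter


def extractive_summary(text: str, max_sentences: int = 2) -> str:
    """Keep the highest-scoring sentences, in their original order."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    words = re.findall(r"[a-z]+", text.lower())
    # Ignore very short words as a rough proxy for stop words.
    freq = Counter(w for w in words if len(w) > 3)

    def score(sentence: str) -> int:
        return sum(freq[w] for w in re.findall(r"[a-z]+", sentence.lower()))

    ranked = sorted(range(len(sentences)), key=lambda i: (-score(sentences[i]), i))
    keep = sorted(ranked[:max_sentences])
    return " ".join(sentences[i] for i in keep)


# Invented sample text for illustration only.
report = (
    "Revenue grew in the third quarter. "
    "Revenue growth came from cloud revenue. "
    "The office moved."
)
summary = extractive_summary(report, max_sentences=2)
```

Abstractive summarization, where a model rewrites the content in new words, usually compresses further but adds another model call and another place for errors to enter.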

Context Isolation

- Goal: Prevent information leakage and manage complexity.

- How: Split context across sub-agents, or use sandboxing or state objects.

- Example: In a workflow, the “booking agent” only receives travel details, not unrelated HR data.
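The booking-agent example could be implemented as an allow-list over a shared state object. The sketch below is one possible approach; the `AGENT_SCOPES` mapping, the agent names, the field names, and the sample values are all hypothetical.

```python
# Hypothetical allow-list: which shared-state keys each agent may see.
AGENT_SCOPES = {
    "booking_agent": {"destination", "dates", "traveler_name"},
    "hr_agent": {"employee_id", "leave_balance"},
}


def scoped_context(agent: str, shared_state: dict[str, str]) -> dict[str, str]:
    """Return only the keys the named agent is allowed to see."""
    allowed = AGENT_SCOPES.get(agent, set())  # unknown agents get nothing
    return {k: v for k, v in shared_state.items() if k in allowed}


# Invented sample state for illustration only.
shared_state = {
    "destination": "Lisbon",
    "dates": "2025-09-01 to 2025-09-05",
    "traveler_name": "A. Example",
    "employee_id": "E-1001",
    "leave_balance": "12 days",
}
booking_view = scoped_context("booking_agent", shared_state)
```

Defaulting unknown agents to an empty view keeps the failure mode safe: a misconfigured agent sees too little rather than too much.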

Structured Outputs

- Goal: Ensure consistency and machine-readability.

- How: Specify output formats (e.g., JSON, XML) in the context or prompt.

- Example: Requiring the LLM to return a structured itinerary, not free-form text.
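Specifying a format is only half of the technique; the other half is checking that the model actually followed it. The sketch below pairs a format instruction with a validator built on the standard `json` module. The itinerary fields, `FORMAT_INSTRUCTION`, and `parse_itinerary` are hypothetical, and the raw strings stand in for model output.

```python
import json

# Hypothetical itinerary schema: field name -> expected Python type.
REQUIRED_FIELDS = {"origin": str, "destination": str, "depart_date": str, "nights": int}

# Text that could be appended to the prompt to request the format.
FORMAT_INSTRUCTION = (
    "Respond with a single JSON object containing exactly these keys: "
    + ", ".join(REQUIRED_FIELDS)
)


def parse_itinerary(raw: str) -> dict:
    """Parse model output and raise ValueError if it does not match the schema."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for key, expected in REQUIRED_FIELDS.items():
        if not isinstance(data.get(key), expected):
            raise ValueError(f"missing or mistyped field: {key}")
    return data


# Stand-in for a well-formed model response.
good = '{"origin": "BER", "destination": "LIS", "depart_date": "2025-09-01", "nights": 4}'
itinerary = parse_itinerary(good)
```

On a `ValueError`, a caller might retry the request with the error message added to the context, which is itself a small piece of context engineering.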

Workflow Engineering

- Goal: Sequence LLM and non-LLM steps to optimize context at each stage.

- How: Orchestrate multi-step pipelines, manage state transitions, and trigger external tools.

- Example: A support bot that first classifies a ticket, then retrieves relevant FAQs, then drafts a response.
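The classify, retrieve, draft sequence can be sketched as three small functions chained together. In this sketch the classifier is a keyword stub and the final step only builds the prompt for the drafting call, since the actual model call depends on the provider. All function names, the `FAQS` store, and its contents are hypothetical.

```python
# Hypothetical FAQ store; the entries are invented sample data.
FAQS = {
    "billing": ["Invoices are issued on the first of each month."],
    "login": ["Use the 'Forgot password' link to reset your password."],
}


def classify(ticket: str) -> str:
    """Step 1: keyword stub standing in for an LLM or trained classifier."""
    text = ticket.lower()
    if "invoice" in text or "charge" in text:
        return "billing"
    if "password" in text or "log in" in text:
        return "login"
    return "other"


def retrieve_faqs(category: str) -> list[str]:
    """Step 2: non-LLM lookup keyed by the category from step 1."""
    return FAQS.get(category, [])


def build_draft_prompt(ticket: str, faqs: list[str]) -> str:
    """Step 3: assemble context for the drafting call (the call itself is omitted)."""
    reference = "\n".join(f"- {f}" for f in faqs) or "- (no matching FAQ)"
    return f"Relevant FAQs:\n{reference}\n\nTicket:\n{ticket}\n\nDraft a reply."


def handle_ticket(ticket: str) -> dict:
    """Run the three steps in order and return the intermediate results."""
    category = classify(ticket)
    faqs = retrieve_faqs(category)
    return {"category": category, "prompt": build_draft_prompt(ticket, faqs)}


result = handle_ticket("I was charged twice on my last invoice.")
```

Because classification runs first, the drafting prompt contains only the FAQs for one category instead of the whole knowledge base.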

Addressing Challenges: Evaluation, Risks, and Skills

Evaluation and Observability

A recurring industry pain point is the lack of robust, standardized evaluation pipelines for context engineering. While practitioners agree on the need for systematic testing (e.g., measuring answer relevance, latency, cost), detailed methodologies are rare.

Best practices include:

- Logging context windows and LLM outputs for traceability

- A/B testing different context selection/compression strategies

- User feedback loops to refine context assembly

Risks and Limitations

- Privacy and Security: Context windows may inadvertently expose sensitive data. Context isolation and redaction are essential safeguards.

- Context Poisoning: Malicious or faulty context can mislead models, leading to unreliable or unsafe outputs.

- Tool-Centric Bias: Many frameworks promote their own context engineering paradigms, which may not generalize across use cases.

- Skill Gaps: As context engineering matures, organizations must invest in training or hiring for this emerging discipline.
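As one small example of the redaction safeguard mentioned under Privacy and Security, the sketch below masks email addresses and US-style Social Security numbers before text enters a context window. The two patterns are illustrative assumptions and far from complete; production redaction usually relies on dedicated PII-detection tooling. The `redact` function and the sample string are hypothetical.

```python
import re

# Illustrative patterns only; they will miss many real-world formats.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def redact(text: str) -> str:
    """Replace matched emails and SSN-shaped numbers with placeholder tags."""
    text = EMAIL.sub("[EMAIL]", text)
    return SSN.sub("[SSN]", text)


# Invented sample text for illustration only.
raw = "Contact jane.doe@example.com, SSN 123-45-6789."
clean = redact(raw)
# clean == "Contact [EMAIL], SSN [SSN]."
```

Placeholder tags, rather than outright deletion, let the model still see that a value of that type was present.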

Professionalization: The New Discipline

The shift from prompt to context engineering is more than a technical adjustment; it is a professional evolution. Deep systems thinking and cross-functional collaboration are becoming essential. It remains an open question whether specialized roles are needed or whether context engineering should be a core competency for all AI practitioners.

Next Steps for Teams and Organizations

As AI systems become more capable and agentic, context engineering is no longer a “nice-to-have”—it’s a foundational design principle. Teams should:

- Audit their current context pipelines: Where are failures occurring? Are models getting the right information at the right time?

- Invest in tooling and observability: Adopt frameworks that make context flows transparent and testable.

- Prioritize security and privacy: Build safeguards against context leakage and poisoning.

- Develop context engineering skills: Upskill existing teams or hire for this emerging discipline.

- Collaborate and standardize: Share best practices, contribute to open benchmarks, and push for industry-wide evaluation standards.

The industry’s growing awareness of context’s critical role in AI is more than a trend—it’s a reckoning. By embracing context engineering, we can unlock the next level of reliability, efficiency, and trust in AI-powered systems.

Further Reading

- The New Skill in AI is Not Prompting, It’s Context Engineering – Philipp Schmid

- Context Engineering for Agents – LangChain Blog

- Context Engineering for Agents – LangChain Video

- A Survey of Context Engineering for Large Language Models – arXiv

- Context Engineering - What it is, and techniques to consider – LlamaIndex

- How Long Contexts Fail – Drew Breunig